Since I started writing TheWineKnowLog© in March of last year, I have purposely refrained from writing very much about the many issues, business and political, that appear to have enraged, engaged, and otherwise piqued the pundits and business leaders and glitterati of the wine business.

I took this approach for two main reasons. First, I believe that most of you, readers and followers of TWKL, are not much interested in the ins and outs of the wine business, the political aspects involving commerce and regulation, etc. Second, I have many friends and colleagues, journalists, producers, and critics, who do an excellent job of covering these issues, such that adding my voice would just become background noise and a distraction from what I prefer to concentrate on. Others cover the political/economic/business side of the wine biz better than I do.

If you want insightful commentary, often amusing writing and solid reporting on those aspects of the wine business, please read the work of such fine writers as W. Blake Gray on the Wine-Searcher.com site; Tom Wark, who writes Fermentation News, also on Substack (like me); Oliver Styles on several platforms including Wine-Searcher.com & https://www.internationalwinechallenge.com/Canopy; my fellow MW, Dr Liz Thach MW in https://www.forbes.com/sites/lizthach & her own website, https://lizthachmw.com; Simon Woolf, who writes The Morning Claret; Mike Veseth, theWineEconomist.com; Robert Joseph, writing for meiningers-international.com; & almost any writer in Winebusiness.com. There are others of course, but these are among my favorites.

Yet two recent articles by Oliver Styles (https://www.wine-searcher.com/m/2024/09/putting-an-end-to-perfect-wine-scores?_bhlid=c53369eb902efad4ba7c88688f065c1aacf97428) and Simon Woolf (https://themorningclaret.com/P/are-wine-competitions-a-load-of-rubbish) provoked today’s commentary. They touched on subjects that are germane, I believe, to what many readers who crave information and education about wine, as well as what wine lovers, wine critics and certainly wine producers and business people are concerned about most. They cross over between the business and aesthetic sides of our delightful pursuit of the grape. And for many in the trade, these subjects ‘raise the shields’ more than almost any other aspect of wine except “WTF is Natural Wine?”

I speak of Wine Competition judging, wine writing, Point scores (nothing less than perfection matters!) and a lack of critical thinking (wisdom?) when evaluating wines. This leads additionally to thinking of wine evaluation as a test or competition instead of a means to provide reasonable, informed judgement about a wine’s quality and style that attests to the craftsmanship of the producer.

Judges hard at work evaluating sparkling wines at the International Wine Challenge in London, 2010 (JBMW)

From a reader’s perspective, which I try to consider when writing The WineKnowLog, who actually has the competency to ‘judge’ wine when there no longer appears to be a baseline or standard for doing so based upon experience and widespread knowledge, and when independent, critical thinking is lacking? A number now stands in for an informed opinion and evaluation of a wine, which anyone can offer, no matter their inexperience. There is little room today for taking a contrary viewpoint that doesn’t proclaim virtually all wines ‘fantastic’ or that takes exception to ‘received’ wisdom about a wine, wine region or producer.

As Mr Styles writes in the above cited article: “…my main point: what kind of wine culture are we wine writers promoting if we're not prepared to go out on a limb? What happens if we're not prepared to make mistakes (no speech marks there – make genuine mistakes in rating a wine) and publish them?”

What is it about wine criticism that has gone the opposite direction from, for example, film, music or art criticism? While critics will list their 10 favorite movies of the year, they don’t use a 100 point scoring system. Can you imagine an art critic saying that Rembrandt’s self-portrait is only worth 95 points, whereas Van Gogh’s Starry Night is a perfect 100?

If the current state of wine judging and writing (including blogs, ‘influencers’), let alone how retailers and others sell & market wine, is any indication, then I suggest there is now a standard approach: one of ‘Groupthink’ that cares solely about how many points out of 100 a wine attains, thereby defining ‘quality’ without regard to the competency and experience of the writer and effectively without regard to where the wine is from. Collecting only 100 point wines, no matter who awards them, is now a weird, machismo competition among a certain group of (almost all male, middle-aged) wine collectors, usually with no intention of drinking them, but ‘awesome’ for bragging rights.

Mr Styles sums up this problem further:

“Sure, we can debate whether or not a wine critic can give a 100-point, perfect, score for a wine. Profile-boost or genuine assessment, take your pick. But perhaps we should look at a far greater problem: perfect scorers.

Because there are no more accidents, no more outrageous personality statements, no more pannings of top names. By and large, blind wine tasting has been completely dropped from the repertoire of the wine critic. Very few publications do so – and I’m not counting the ones that taste wines blind and then reassess their score once the wine has been revealed.”

Whew! That is a scorching indictment with which I only partially disagree: the best wine competitions judge wines blind and do not revise the results afterwards, except when a select panel of judges re-examines borderline panel marks. I have been one of those select judges at the International Wine Challenge, among other judgings, and can say that the original results generally are not significantly changed. When they are changed, think of it as I do when peer-reviewing an article for publication, where the reviewers act as ‘fact-checkers’ to make sure that the authors (judges) didn’t leave out something important or misinterpret the data.

Looking at Wine Competitions, furthermore, Mr Woolf notes another critical point that needs consideration. “But it’s not simply about sales. For many wineries, gaining awards is about kudos. It’s about putting themselves out there and benchmarking performance against their competitors.” This is quite important to understand. Competitions, as I noted above, are only as good as the variety of entries submitted and their number, AND the competency of the judges.

This is a worthy goal and an important aspect of wine judgings. Yet even with large, inclusive tastings, few ‘prestige’ wineries submit their wines, fearful of being shown up by less exalted competitors. Moreover, virtually no competitions (or for that matter, few wine publications) are willing to spend their own money to go out and buy significant wines for comparative evaluation. To my knowledge, in the US only one competition, the Orange County (CA) Commercial Wine Competition, will actually go out and purchase wines it feels should be included in the judging performed only by professional winemakers or owners.

Of course, wine competitions are expected to generate medals; wineries pay money for submitting their wines, as much as $150 or more per entry. But the value of that medal to the winemaker varies. Even though around 80% of the wines submitted to the Decanter World Wine Awards (DWWA), the world’s largest competition (~18,000 wines last year), receive awards (disclosure: I have judged DWWA several times), the overall expertise and experience of its judges suggest that the awarded wines are worthwhile. As Mr Woolf notes: “DWWA has been criticised for awarding too many medals (up to 80% of the entries get an award), but I’m not honestly sure if artificially capping the number of medals is a better approach.”

More selective judgings, such as those sponsored by the OIV (Organisation Internationale de la Vigne et du Vin), mandate that no more than 30% of the wines can receive a medal, but my experience of judging them is of a lesser quality of judges, a dubious judging system, and a lack of interest in submissions from more prestigious or ‘classic’ areas. But I haven’t judged an OIV competition in several years, so things may have changed, hopefully for the better.

PERSONAL EXPERIENCE & KNOWLEDGE MATTERS

I have been active in the wine business for over 50 years. I have developed an organized system for tasting based upon specific training (the MW), experience, learning from older mentors, academic education and tasting thousands of wines in many regions of the world. I choose to judge competitions only where I see that other judges have reasonably similar backgrounds and appropriate experience. Fortunately, most of the major competitions, as Mr Woolf points out, do just that.

Mr Woolf admits his ignorance of major wine judgings in the US as he has not participated in many of them. I have. There are only a handful anymore that I would feel ‘comfortable’ judging at, excepting those ‘regional’ or specialty wine judgings which are localized and prioritize a mostly familiar set of judges who know the terrain better.

What I object to about most judgings here in the US (elsewhere as well, perhaps), and what Mr Woolf, Mr Styles, and other judges might agree with but are hesitant to express, is that, unlike the excellent Australian Show Judging System, judgings generally are not based on a system whereby all judges evaluate to the same scale, based upon specific standards for each medal level, with the criteria for marking each level established from the start.

In Australia’s system, which I strongly approve of, judges are carefully vetted, judges-in-training are assigned to each panel, and judges are briefed on any particular issues/characteristics to consider for a particular type of wine. Aussie Show Judgings are organized to guide consumers towards the best and most typical wines, provide feedback to the producers about where their wines stand, and clearly point out to the world the overall quality of Australia’s wines.

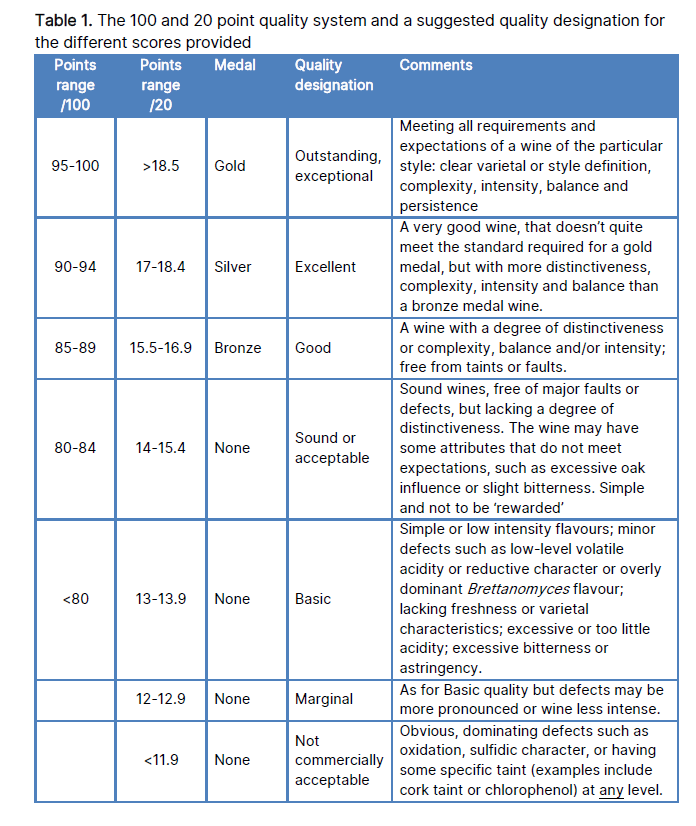

The Australian Wine Research Institute (AWRI) publishes the manual that governs how wine judgings are organized and wines evaluated (AWRI Advanced Wine Assessment Course website: www.awri.com.au). You may not use a 20 point scale evaluation system (I continue to do so, unofficially), but the Aussie Show System tells judges what they should be evaluating for a given flight (Semillon, Oak Aged Shiraz, etc), what point spread should be given at a given medal level, what the standards are for giving said medal, and much more (see chart below from the AWRI manual).

(Courtesy AWRI Advanced Wine Assessment Course)

I wish ALL wine competitions insisted on a standardized basis for judging such as this. The rules ensure, as much as is possible, an objective evaluation for so subjective a product as wine. Scores and medals should represent specific standards for wine typicity, balance, length, intensity and complexity/concentration (BLIC). This approach still leaves room for judges’ subjective appraisal of style, yet in theory offers consumers and the wineries themselves assurance that the wines are evaluated from the same point of view and scale.

I distrust, as consumers should too, the results from smaller competitions that do not seek out competent, experienced judges and are only too willing to engage new and inexperienced people in order to establish some sort of recognition in the marketplace by awarding hundreds of medals.

With that said, I firmly believe, as does Mr Woolf, in the efficacy of well organized, competently staffed and inclusive wine judgings. If four individuals of different backgrounds, reasonably similar experience and knowledge can agree that Wine B merits a Silver Medal (fine to excellent), I suggest this is a more accurate appraisal of wine quality than that of an individual reviewer, though of course there are exceptions, always (Yours truly included :))

In his article, Mr Woolf makes the point that

“Competitions are a bit like the wines themselves. They vary hugely in terms of size, quality and influence. None of them are trivial however. A huge amount of effort and thought goes into their processes. Whether you value the outcome will depend on your personal purchasing habits and wine preferences, but in all cases multiple wine professionals tasted, swirled, cogitated and sweated over the results.”

THE POINT IS…

Wine critics and the overwhelming use of the 100 point scale should be viewed critically and skeptically. Personally, I have always considered it BS, ever since Robert Parker’s The Wine Advocate started using it around 1980, followed by The Wine Spectator. Now almost all wine reviewers have swallowed the Kool-Aid™ using it. The entire wine industry structure is consumed by Points, from the retailer posting shelf talkers with Point scores to distributors buying only wines that have high scores.

Today’s wine writers are guilty too, as Mr Styles pertinently suggests:

“The sad thing here is that this is now well-established. It is now impossible (if it ever was) to imagine a major critic of any stripe turning up in Burgundy to conclude that Pinot Noir is insipid Ribena, or the more imaginable (but equally unlikely) scenario that the latest release of Lafite really wasn't that good. This overlaps a little with my previous examination of reputation and wine estates, but I think that having no "mistakes" when it comes to a wine taster's ratings is a real problem.

Firstly, there is very little room for personality any more. There are fewer and fewer disagreements. There are no debates or discussions – unless you include a sly look over the shoulder to say "well, I was 97 points on that – no way it was 95".”

In my earlier career, I wrote on Italian wines for a few years in the mid-1990s for Stephen Tanzer’s esteemed International Wine Cellar publication, now part of the fine Vinous publication group. One of my big reviews of Tuscan wines at the time included some of the region’s reputed best, like Antinori’s Tignanello, Ornellaia & the esteemed Sassicaia. In July 1998 I wrote that something was amiss with this normally fine wine, Vintage 1995, noting that after three separate tastings, the wine simply didn’t deliver. I think I rated it at the time probably in the mid-80s. This was not appreciated. Pretty much every other critic gave it high marks.

But Mr Tanzer, to his credit, didn’t ask me to change my remarks or evaluation, though I suspect the powers that be at the winery might have sent him a message of disapprobation! Today’s writers or critics, retailers and avid wine lovers would be loath to condemn a bottle of such magnitude for fear of being ostracized from visiting the property for tasting, or losing their store’s allocation of the next vintage. Access appears more important today than accuracy and honest opinion.

Come on, folks! The 100 point marking system comes from academia and was developed to measure testable, ‘objective’ results on exams, such as mathematics, established by the teacher based upon the questions, before being adopted for more subjects. Moreover, it was originally established in the 1700s to ‘rank’ students, not specifically to evaluate quality or ‘correctness’. (For an interesting history and discussion of this system, see this essay in Edutopia: https://www.edutopia.org/article/why-the-100-point-grading-scale-is-a-stacked-deck/)

A student who gets every answer correct on a math exam, where all of the questions have concrete right or wrong answers, earns a perfect 100% score (A+, if you will). But even accepting (as I do) that some aspects of wine, such as the balance of acid, pH and dry extract, can be objectively measured, I don’t see how a wine can be ‘perfect’, especially when it is youthful, or even still in barrel at a Bordeaux Grand Cru château.

I have had some legitimately ‘great’ or ‘outstanding’ wines over the years. But I can only use the word ‘perfect’, if at all, for wines at or near their peak of maturity (potential?) such as the 1949 Inglenook Estate Pinot Noir (in 2000), 1962 La Tâche Domaine de La Romanée-Conti (1978-79), or JJ Prüm 1949 Wehlener Sonnenuhr Feinste Auslese Riesling (1972).

For example, I greatly admire Decanter Magazine’s fine Bordeaux Correspondent Jane Anson for her acumen, clarity and deep knowledge, but to award 100 point perfect scores to five three-year-old 2020 Bordeaux (for the record, Les Carmes Haut Brion, Troplong Mondot, Mouton Rothschild, Petrus & Trotanoy) seems ludicrous, like calling a three-year-old child perfect. Would it not be better to say these wines are outstanding and promise so much more ‘perfection’ in another 15-30 years?

Wine is, IMHO, only partly an objective subject. ‘Quality’ is a subjective opinion, but an experienced critic or taster should have a better, critical perspective on what quality is and how it is expressed in terms of BLIC for the type of wine itself. The distortion the 100 point system brings to critiquing or judging wine has eliminated any perspective producers and consumers have that a wine given 80 points, if judged in a school situation, is a B: Very Good, Above Average.

Instead, in Winespeak, it is mediocre at best, possibly even flawed. How did we get to this idiotic stage using a meaningless guide to wine quality and character? What’s the difference between a 92 and a 94 point wine? They are both Excellent, possibly Outstanding, in the same manner that Da Vinci’s Last Supper & Raphael’s School of Athens are. It is ‘pointless’ to compare these two paintings. They are different yet equally excellent in ways that only words and knowledge can convey.

Good wine judging and good wine critics used to write about what the wine tasted like, whether it was balanced, and where it fit into the grand scheme of wine. Judges with their awards, Writers, critics, as well as producers talked about wines descriptively, before making their evaluation known via understandable language; Excellent, Very good, Typical, Average.

Some writers still do, also with descriptive language, but in a world with short attention spans and a consumerist POV that has to ‘quantify’ everything, the brevity of the 100 point system and its association with competition and rank (let’s just call it ‘class’) means words don’t matter anymore in our fast-paced world.

The problem is magnified by the fact (or appearance of it) that today’s assemblage of critics, wine collectors, producers and others has been conditioned to the idea that only rich, powerful, concentrated, weighty wines are worthy of 95-100 point scores. Where is the 100 point Rosé de Provence in this scheme?

As Mr Woolf writes obliquely on the subject, “No whoopsies for when you thought the heavy bottle was a Napa Cabernet but turned out to be a Bonarda from a nouveau-riche estate in Argentina. No red cheeks because you confused finesse with lack of concentration, structure and flavor, and gave a top Burgundy a score in the mid-80s.”

I will leave you with these thoughts. If you read wine reviews, or rely on your local wine merchant or grocery store for wine recommendations, or use competition medals as your means of selecting what wines to drink and purchase, reflect upon how much you rely on a wine’s score, without any other information or description, and decide whether the wine matches up with your own appraisal of it. Is a perfect wine really all that matters, even if it exists? When virtually everyone is playing the same score without providing at least some context, a little skepticism is warranted. Agree to disagree with me. Even if you are just seeking out a simple Pinot Grigio, quality matters, and a point score is useless for telling you what to expect flavor-wise.